The Agentic Sprawl Problem

Something shifted in the last year. When I walk into a client engagement now, nobody asks me how to adopt AI. The AI is already there. It's in the IDE, in the CI pipeline, generating pull requests at 2 AM. The conversation has moved from adoption to control.

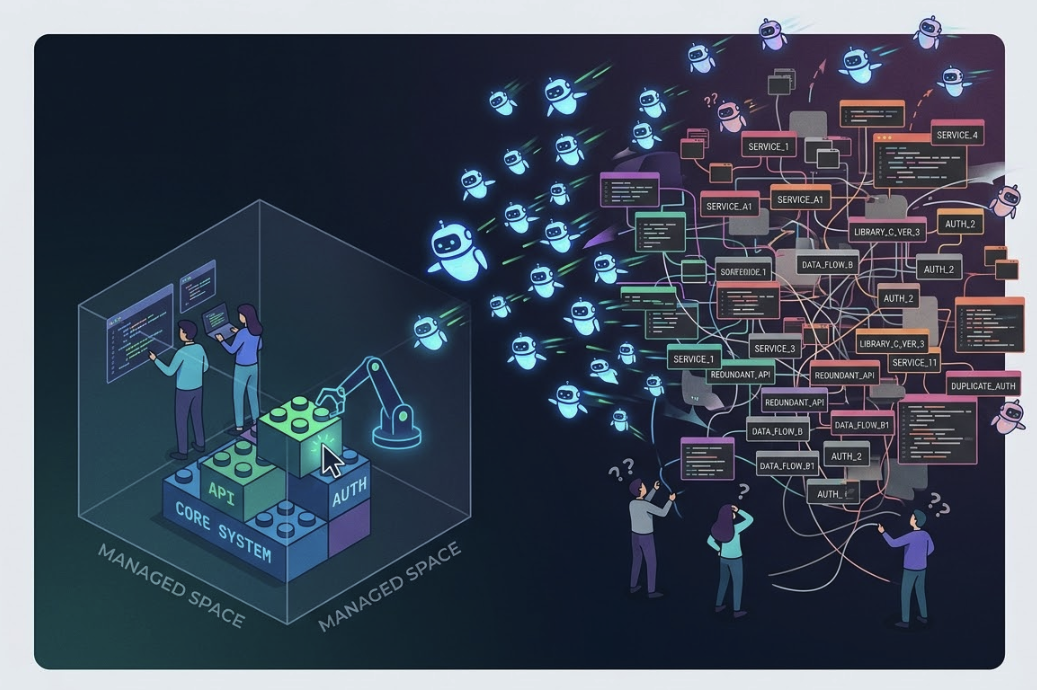

Tools like Claude Code, Cursor, Copilot Workspace, and Devin have made it possible for a single developer to produce the output of a small team. That's genuinely exciting. But when you multiply that across an engineering org of 30, 80, or 200 people, you get something nobody planned for: an explosion of code, services, and architectural decisions being made at machine speed by agents that don't attend your architecture review meetings.

I'm calling this agentic sprawl, and I think it's going to be one of the defining operational challenges of the next two years.

What sprawl actually looks like

Sprawl doesn't announce itself. It accumulates. A developer uses an AI agent to scaffold a new microservice for a feature that could have been a function in an existing one. Another agent generates a data pipeline that duplicates logic already living in a shared library, but slightly differently. A third writes a perfectly functional authentication wrapper that ignores the one your platform team spent three months building.

None of these are bad decisions in isolation. The code works. The tests pass. The PR looks clean. But zoom out six months and you have 40% more surface area to maintain, three competing patterns for the same problem, and a dependency graph that makes senior engineers wince.

Most of the time, agents write surprisingly decent code. The problem is that they write contextless code. They solve the problem in front of them without understanding the organizational decisions that led to the current architecture. They don't know that your team decided to consolidate around a specific event bus, or that the reason you use a particular ORM has nothing to do with technical merit and everything to do with what your ops team can actually support at 3 AM.

Fleets, skills, and the governance gap

The tooling is evolving fast. Claude Code now supports hooks, custom slash commands, MCP servers, and skills that shape how agents operate within a codebase. You can configure a CLAUDE.md file that acts as persistent context, giving the agent knowledge of your conventions, your architecture boundaries, and your preferences. Other tools are building similar abstractions.

This is the right direction. But most engineering orgs haven't caught up yet. They're still thinking about AI agents as individual productivity tools, like a faster autocomplete. They haven't started thinking about fleet management: how do you govern dozens or hundreds of agents operating across your codebase simultaneously?

The questions that matter now are operational:

- Who defines the skills and constraints that agents operate under?

- How do you enforce architectural boundaries when an agent can scaffold a new service in 90 seconds?

- What does code review look like when the volume of generated code exceeds your team's ability to review it thoughtfully?

- How do you maintain a coherent system when multiple agents are making concurrent architectural micro-decisions?

- What happens when an agent's context window doesn't include the tribal knowledge that prevents your team from repeating past mistakes?

These aren't hypothetical. I'm seeing every one of them in production environments right now.

Toward managed agency

Slowing down adoption would be a mistake. The productivity gains are real and they compound. Teams that use agentic tools well are shipping faster, with fewer bugs, and with more ambitious scope than they could have attempted a year ago. The answer is to treat agent management the way we learned to treat cloud infrastructure: with deliberate governance, shared conventions, and platform-level guardrails.

1. Codify your architecture as agent context

If your architectural decisions only live in people's heads or in a Confluence page nobody reads, agents will ignore them. Put them where agents actually look. CLAUDE.md files, system prompts, and skill definitions are the new architecture documentation. They need the same care and versioning as your production code, because they directly shape what gets built.
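
One way to give agent context the same care as production code is to fail the build when it is missing. Here is a minimal sketch, assuming a hypothetical monorepo layout in which every directory under a `services/` root is one service and each is expected to carry its own CLAUDE.md. The function name and layout are illustrative, not a convention any tool requires.

```python
from pathlib import Path


def services_missing_context(services_root: Path, context_name: str = "CLAUDE.md") -> list[str]:
    """Return the names of service directories that have no agent context file.

    Assumes (hypothetically) that each immediate subdirectory of
    services_root is one service.
    """
    missing = []
    for entry in sorted(services_root.iterdir()):
        # Plain files at the root are ignored; only directories count as services.
        if entry.is_dir() and not (entry / context_name).is_file():
            missing.append(entry.name)
    return missing
```

A CI job could call this on the services root and exit non-zero when the list is not empty, so a new service cannot merge without the context agents will read.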

2. Build platform-level boundaries, not just guidelines

An agent that knows it "should" use your shared authentication library is less reliable than an agent working in a repo where a new auth flow simply cannot be built without importing that library. Make the right thing the easy thing. Use hooks, pre-commit checks, and CI-level validation to catch architectural drift before it merges, regardless of whether a human or an agent wrote the code.
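
As a rough illustration of that kind of check, the sketch below uses Python's standard `ast` module to flag direct imports of libraries that application code is supposed to reach only through a platform package. The module names in `FORBIDDEN_MODULES` are assumptions chosen for the example; substitute whatever your own platform library wraps.

```python
import ast

# Assumed policy for this example: application code must not import these
# directly and should go through the platform team's auth package instead.
FORBIDDEN_MODULES = {"jwt", "passlib"}


def forbidden_imports(source: str, forbidden=frozenset(FORBIDDEN_MODULES)) -> list[tuple[int, str]]:
    """Return (line number, module) for each import of a forbidden top-level module."""
    hits = []
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Import):
            names = [alias.name for alias in node.names]
        elif isinstance(node, ast.ImportFrom) and node.level == 0 and node.module:
            # level == 0 means an absolute import; relative imports are skipped.
            names = [node.module]
        else:
            continue
        for name in names:
            if name.split(".")[0] in forbidden:
                hits.append((node.lineno, name))
    return sorted(hits)
```

Run from a pre-commit hook or CI step over the changed files, a non-empty result blocks the merge. The check doesn't care who wrote the import, which is the point.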

3. Invest in review infrastructure, not just review culture

Human review capacity doesn't scale with the volume of code agents can produce. When agent-assisted developers are producing 3-5x the volume of changes, you need tooling that helps reviewers focus on what matters: architectural fit, security implications, and system-level coherence. Automated checks for dependency duplication, pattern consistency, and API contract violations free up human reviewers to think about the things agents can't.
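
To make one of those automated checks concrete, here is a small sketch of a dependency duplication report. It assumes you have already parsed each service's manifest into a plain mapping of package name to pinned version, and it reads "duplication" narrowly as the same package pinned at different versions across services. Both are simplifying assumptions of mine, not a standard definition.

```python
from collections import defaultdict


def conflicting_pins(manifests: dict[str, dict[str, str]]) -> dict[str, dict[str, list[str]]]:
    """Map each package pinned at more than one version to {version: [services]}.

    manifests maps a service name to its {package: pinned version} mapping.
    """
    by_package = defaultdict(lambda: defaultdict(list))
    for service, deps in sorted(manifests.items()):
        for package, version in deps.items():
            by_package[package][version].append(service)
    # Keep only packages that appear with two or more distinct versions.
    return {
        package: dict(sorted(versions.items()))
        for package, versions in sorted(by_package.items())
        if len(versions) > 1
    }
```

Posting this report as a PR comment gives reviewers a short list of drift to look at instead of asking them to spot it by eye in a large diff.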

4. Treat agent configuration as a team sport

The developer who configures how agents operate in your codebase is making architectural decisions, whether they realize it or not. The skills you define, the context you provide, and the constraints you set determine the shape of the code that gets generated at scale. This shouldn't be an individual activity. It should be part of your platform engineering practice, reviewed and iterated on like any other shared infrastructure.

5. Measure for coherence, not just velocity

It's easy to celebrate the speed gains. Harder to measure whether your system is becoming more coherent or less. Track service count growth relative to feature growth. Monitor dependency sprawl. Watch for the proliferation of similar-but-slightly-different implementations. The metrics that tell you whether agents are making your system better or just bigger are different from the ones that tell you they're making your team faster.
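
The first of those metrics can be sketched in a few lines. The version below assumes you can count services and shipped features at two points in time; how you count a "feature" is up to you, and the `Snapshot` type and `sprawl_ratio` name are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Snapshot:
    services: int  # number of deployed services at this point in time
    features: int  # number of shipped features, however your team counts them


def sprawl_ratio(before: Snapshot, after: Snapshot) -> float:
    """Relative service growth divided by relative feature growth."""
    if before.services <= 0 or before.features <= 0:
        raise ValueError("baseline counts must be positive")
    service_growth = (after.services - before.services) / before.services
    feature_growth = (after.features - before.features) / before.features
    if feature_growth == 0:
        raise ValueError("no feature growth in this period; ratio is undefined")
    return service_growth / feature_growth
```

Going from 20 services and 40 features to 30 services and 50 features gives a ratio of 2: services grew twice as fast as features. No single value is the right threshold, but a ratio that keeps climbing quarter over quarter suggests the system is getting bigger faster than it is getting more capable.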

The opportunity inside the problem

Here's what I find genuinely exciting about this moment: the organizations that figure out agentic governance early are going to have an enormous advantage. The discipline required to manage agents well turns out to be the same discipline that makes engineering organizations excellent. Clear architectural boundaries. Explicit conventions. Automated enforcement. Thoughtful review. These have always been best practices. Agentic AI just makes them non-optional.

The companies I work with that are doing this well share a common trait: they treat AI agents less like developer tools and more like junior engineers who joined the team by the dozen overnight. They need onboarding. They need guardrails. They need architecture context. And they need someone watching the system-level picture while they work.

That system-level view is where most organizations need help. The pace of change simply hasn't left room to step back and design for it. If that sounds familiar, that's exactly the kind of problem we work on at Gradient Methods.